Explainable Deep Learning for Brain Cancer Classification: A Comparative Study of Transfer Learning and Training-from-Scratch Models Using SHAP and LIME

DOI:

https://doi.org/10.66021/pakmcr832

Keywords:

Brain Tumor Detection; MRI; CNN; Transfer Learning; VGG16; LIME; SHAP

Abstract

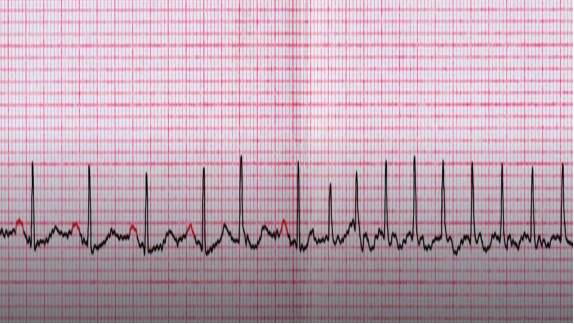

Diagnosing brain cancer from Magnetic Resonance Imaging (MRI) scans is a critical but difficult and time-consuming task in medical image analysis. Despite concerns about the interpretability of Deep Learning (DL) models, DL can dramatically improve the ability to automate this procedure. In this study, we first trained a convolutional neural network (CNN) from scratch on 2,000 MRI images (1,000 tumor and 1,000 non-tumor) and then tested it on 600 images (300 tumor and 300 non-tumor). The scratch CNN achieved 98% accuracy, 100% sensitivity, 96% precision, 98% F1-score, and 96% specificity. To improve performance further, we applied transfer learning with four pretrained architectures: DenseNet121, InceptionV3, ResNet50, and VGG16. Among these, VGG16 performed best, achieving perfect classification with 100% on every evaluation metric (accuracy, precision, sensitivity, F1-score, and specificity). To address the interpretability challenge, we applied two explainability techniques, Shapley Additive Explanations (SHAP) and Local Interpretable Model-agnostic Explanations (LIME), to visualize how VGG16 reaches its predictions. These methods provided both local and global insights, highlighting critical tumor regions, validating the model's predictions, and strengthening trust in its real-world clinical applications. The results confirm that VGG16 achieved the highest performance while also providing interpretable explanations, making it a robust and trustworthy model for automating brain cancer diagnosis.

asifrahman557/Explainable-AI-using-SHAP-LIME
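As a quick consistency check on the scratch-CNN figures, the minimal Python sketch below recomputes the five reported metrics from a binary confusion matrix. The abstract does not publish raw counts: TP = 300, FN = 0, TN = 288, FP = 12 are assumed here, back-calculated from 100% sensitivity and 96% specificity on 300 tumor and 300 non-tumor test images. The helper `binary_metrics` is hypothetical and is not taken from the authors' repository.

```python
# Illustrative consistency check for the scratch-CNN results.
# ASSUMPTION: the confusion-matrix counts are not reported in the abstract;
# they are back-calculated from 300 tumor / 300 non-tumor test images,
# 100% sensitivity, and 96% specificity.

def binary_metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Return standard binary-classification metrics as fractions (hypothetical helper)."""
    sensitivity = tp / (tp + fn)          # recall on the tumor (positive) class
    specificity = tn / (tn + fp)          # recall on the non-tumor (negative) class
    precision = tp / (tp + fp)            # fraction of tumor predictions that are correct
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    f1 = 2 * precision * sensitivity / (precision + sensitivity)
    return {
        "accuracy": accuracy,
        "precision": precision,
        "sensitivity": sensitivity,
        "specificity": specificity,
        "f1": f1,
    }


# Assumed counts for the 600-image test set (positive class = tumor).
metrics = binary_metrics(tp=300, fp=12, tn=288, fn=0)
for name, value in metrics.items():
    print(f"{name:>11}: {value:.2%}")
```

Rounded to whole percentages, these assumed counts give 98% accuracy, 96% precision (300/312), 100% sensitivity, 96% specificity, and 98% F1-score (50/51), matching the figures reported for the scratch model.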